Leveraging Generative AI to Transform Software Testing

Are you a tech leader trying to improve software quality and release faster? Does testing seem like a bottleneck? This article discusses how generative AI can help. AI that generates test data, scenarios, and environments can improve coverage, speed, and defect detection.

We’ll show how leading companies are using deep learning and fuzzing to transform testing, how to start generative testing in your own setup, and which vendor and open-source tools can help along the way. Read on to learn how to integrate generative AI into your workflows for faster software development, higher quality, and happier customers.

Tackling Testing Challenges Head-On

High-quality software requires comprehensive testing. Unfortunately, resource constraints and gaps in test quality sometimes let defects slip into production. The result can be unhappy customers, revenue lost to disruptions, and a damaged reputation. Even with test automation, critical gaps remain:

Manual Test Creation: Creating tests manually consumes a lot of valuable engineering time.

Test Data Shortage: It’s tough to simulate real-world conditions when you don’t have enough test data.

Happy Path Limitations: Tests that follow the ideal path often fail to uncover potential edge cases.

Performance Testing: Replicating production loads for performance testing can be challenging.

Feedback Delays: Slow feedback loops between defect detection and remediation can lead to delays in fixes.

Supercharging Testing with Generative AI

Generative AI uses techniques like deep learning and fuzzing to automatically create test data, scenarios, and simulations. This provides immense benefits across the software development lifecycle.

🔹Generate valid edge cases to uncover defects early in development.

🔹Create challenging and realistic test data to find issues before release.

🔹Continuously expand test coverage through automated exploration.

🔹Adapt tests to match production usage and load scenarios.

🔹Instantly detect anomalies and get feedback on defects in real time.

🔹Reduce maintenance costs by auto-updating outdated test scripts.
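
As a rough illustration of how automated exploration surfaces edge cases, here is a minimal mutation-fuzzing sketch that uses only the Python standard library. The function under test (unit_price), its planted divide-by-zero bug, the seed inputs, and the mutation alphabet are all made up for this example. Production fuzzers such as AFL use coverage feedback and are far more capable.

```python
import random


def unit_price(order: str) -> int:
    """Hypothetical function under test, with a planted bug:
    a quantity of 0 is not rejected."""
    total, quantity = order.split(",")   # ValueError unless exactly one comma
    return int(total) // int(quantity)   # ZeroDivisionError when quantity is 0


def mutate(seed: str, rng: random.Random) -> str:
    """Apply one random edit: insert, delete, or replace a character."""
    alphabet = "0123456789,-"
    chars = list(seed)
    op = rng.choice(["insert", "delete", "replace"])
    pos = rng.randrange(len(chars) + 1)
    if op == "insert" or not chars:
        chars.insert(pos, rng.choice(alphabet))
    elif op == "delete":
        del chars[min(pos, len(chars) - 1)]
    else:
        chars[min(pos, len(chars) - 1)] = rng.choice(alphabet)
    return "".join(chars)


def fuzz(target, seeds, iterations=2000, seed=0):
    """Feed mutated inputs to target and record one example input
    per unexpected exception type."""
    rng = random.Random(seed)  # fixed seed keeps runs reproducible
    findings = {}
    for _ in range(iterations):
        candidate = mutate(rng.choice(seeds), rng)
        try:
            target(candidate)
        except ValueError:
            pass  # treated here as a documented rejection, not a defect
        except Exception as exc:  # anything else deserves a closer look
            findings.setdefault(type(exc).__name__, candidate)
    return findings


findings = fuzz(unit_price, seeds=["1200,3", "500,10"])
for error_name, example in findings.items():
    print(f"{error_name} triggered by input {example!r}")
```

The valid seeds play the role of happy-path tests; the mutations push the inputs just outside that path, which is where the planted bug sits.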

In summary, generative AI testing delivers:

🟩Endless supply of test data to boost coverage

🟩Rapid creation of tests that model real user behaviors

🟩Simulation of production environments and usage

🟩Faster feedback loops through instant anomaly alerts

🟩Ongoing, automated test space exploration
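
To make "tests that model real user behaviors" a little more concrete, the sketch below fits a simple Markov chain to a few recorded user sessions and samples new sessions from it. It is a deliberately small stand-in for a learned generative model, not a deep learning approach. The action names, the recorded sessions, and the helper functions are hypothetical.

```python
import random
from collections import defaultdict


def fit_transitions(sessions):
    """Count observed step-to-step transitions, with start and end markers."""
    counts = defaultdict(lambda: defaultdict(int))
    for session in sessions:
        steps = ["<start>", *session, "<end>"]
        for current, nxt in zip(steps, steps[1:]):
            counts[current][nxt] += 1
    return counts


def sample_session(counts, rng, max_steps=20):
    """Walk the chain from <start>, choosing each next step in
    proportion to how often it was observed."""
    state, session = "<start>", []
    while len(session) < max_steps:
        options = counts[state]
        state = rng.choices(list(options), weights=list(options.values()))[0]
        if state == "<end>":
            break
        session.append(state)
    return session


# Hypothetical recorded sessions; real ones would come from production logs.
recorded = [
    ["login", "search", "view_item", "add_to_cart", "checkout"],
    ["login", "search", "search", "view_item", "logout"],
    ["login", "view_item", "add_to_cart", "view_item", "add_to_cart", "checkout"],
]

rng = random.Random(42)  # fixed seed so generated scenarios are reproducible
model = fit_transitions(recorded)
generated = [sample_session(model, rng) for _ in range(5)]
for session in generated:
    print(" -> ".join(session))
```

Each generated session can then drive an end-to-end test. Because sampling follows observed frequencies, common paths appear often while rarer combinations still show up occasionally.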

💡By harnessing Generative AI, teams can achieve unprecedented test coverage, defect detection, and productivity gains across the development lifecycle. Testing becomes a source of innovation rather than a bottleneck.

Real organizations are already reaping the benefits:

✔️A financial services firm found 150% more security flaws using generative fuzzing compared to manual testing.

✔️A healthcare provider boosted unit test coverage from 62% to 97% with generative data.

✔️A retailer reduced performance testing costs by a whopping 75% with generative load simulation.

Wider Applicability for Both Manual and Automated Testing

Generative AI can bolster testing efforts regardless of the testing stage your organization is at. For manual testing environments, it can accelerate test case creation, expand coverage beyond human-created cases, and facilitate faster feedback loops by rapidly generating test scenarios.

On the other hand, in automated testing settings, it complements existing efforts by generating additional test cases, adapting tests as the application evolves, and aiding in exploratory and performance testing. In essence, whether you’re still reliant on manual testing or have embraced automation, Generative AI can elevate the quality and efficiency of your testing processes.

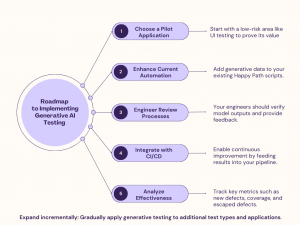

Your Roadmap to Getting Started

Generative AI testing can be phased in effectively.

Potential Challenges and Limitations:

🔺Generative testing complements traditional testing, but human oversight is still required.

🔺Bias in training data can lead to blind spots if not validated properly.

🔺Adoption requires investment in tools, training, and data preparation.

🔺Exploring emerging tools and vendor solutions

Pair programming lets experienced developers review and adjust AI-generated tests to ensure quality and relevance. Regular audits of training data, diversified data sourcing, and balanced data generation can reduce AI bias. Starting with pilot projects to demonstrate value and using open-source tools to lower costs helps offset the initial investment in tools and training.

Leading technology vendors offer various augmented testing solutions:

Google’s TensorFuzz

https://github.com/brain-research/tensorfuzz

Microsoft’s IntelliTest

https://docs.microsoft.com/en-us/visualstudio/test/intellitest-manual/getting-started?view=vs-2022

Applitools Eyes

Tricentis Tosca

https://www.tricentis.com/products/tosca/

Open-source options:

For teams that prefer open-source tools for bringing generative AI into their testing processes, there are several options to consider:

✔️TensorFlow and PyTorch offer robust platforms for creating generative models that can simulate test data and scenarios.

✔️AFL (American Fuzzy Lop) provides security-focused fuzzing, while Boofuzz, a maintained fork of the Sulley fuzzing framework, is well suited to network protocol fuzzing.

✔️For property-based testing, the Hypothesis library in Python enables the generation of complex test data, and for Haskell developers, QuickCheck automates test case generation and has inspired similar tools in other programming languages.
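
Hypothesis and QuickCheck supply input generators and counterexample shrinking out of the box. To show the underlying idea without any dependencies, here is a hand-rolled sketch in plain Python. It does not use the Hypothesis API, and the function under test (dedupe_keep_order), the generator, and the properties are assumptions chosen for illustration.

```python
import random


def dedupe_keep_order(items):
    """Hypothetical function under test: drop repeats, keep first occurrences."""
    seen, result = set(), []
    for item in items:
        if item not in seen:
            seen.add(item)
            result.append(item)
    return result


def random_int_list(rng, max_len=20):
    """Generator: a list of small integers, so duplicates are likely."""
    return [rng.randint(-5, 5) for _ in range(rng.randint(0, max_len))]


def check_property(prop, generator, runs=500, seed=0):
    """Return the first generated input that falsifies prop, or None."""
    rng = random.Random(seed)
    for _ in range(runs):
        value = generator(rng)
        if not prop(value):
            return value
    return None


def no_duplicates_and_same_members(xs):
    out = dedupe_keep_order(xs)
    return len(out) == len(set(out)) and set(out) == set(xs)


def is_idempotent(xs):
    once = dedupe_keep_order(xs)
    return dedupe_keep_order(once) == once


def keeps_length(xs):
    # Deliberately false property, to show what a counterexample looks like.
    return len(dedupe_keep_order(xs)) == len(xs)


assert check_property(no_duplicates_and_same_members, random_int_list) is None
assert check_property(is_idempotent, random_int_list) is None
counterexample = check_property(keeps_length, random_int_list)
print("keeps_length falsified by:", counterexample)
```

Instead of listing specific inputs, the tests state rules that must hold for every input and let the generator search for violations. A real library would also shrink the counterexample to a minimal failing case.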

By combining these vendor and open-source solutions with your in-house expertise, you can pilot Generative AI testing and achieve rapid improvements.

The time to harness the power of GenAI and transform your quality assurance is right now. Don’t let testing hold you back when AI can take your testing efforts to the next level.

Contact us at sales@valueglobal.net